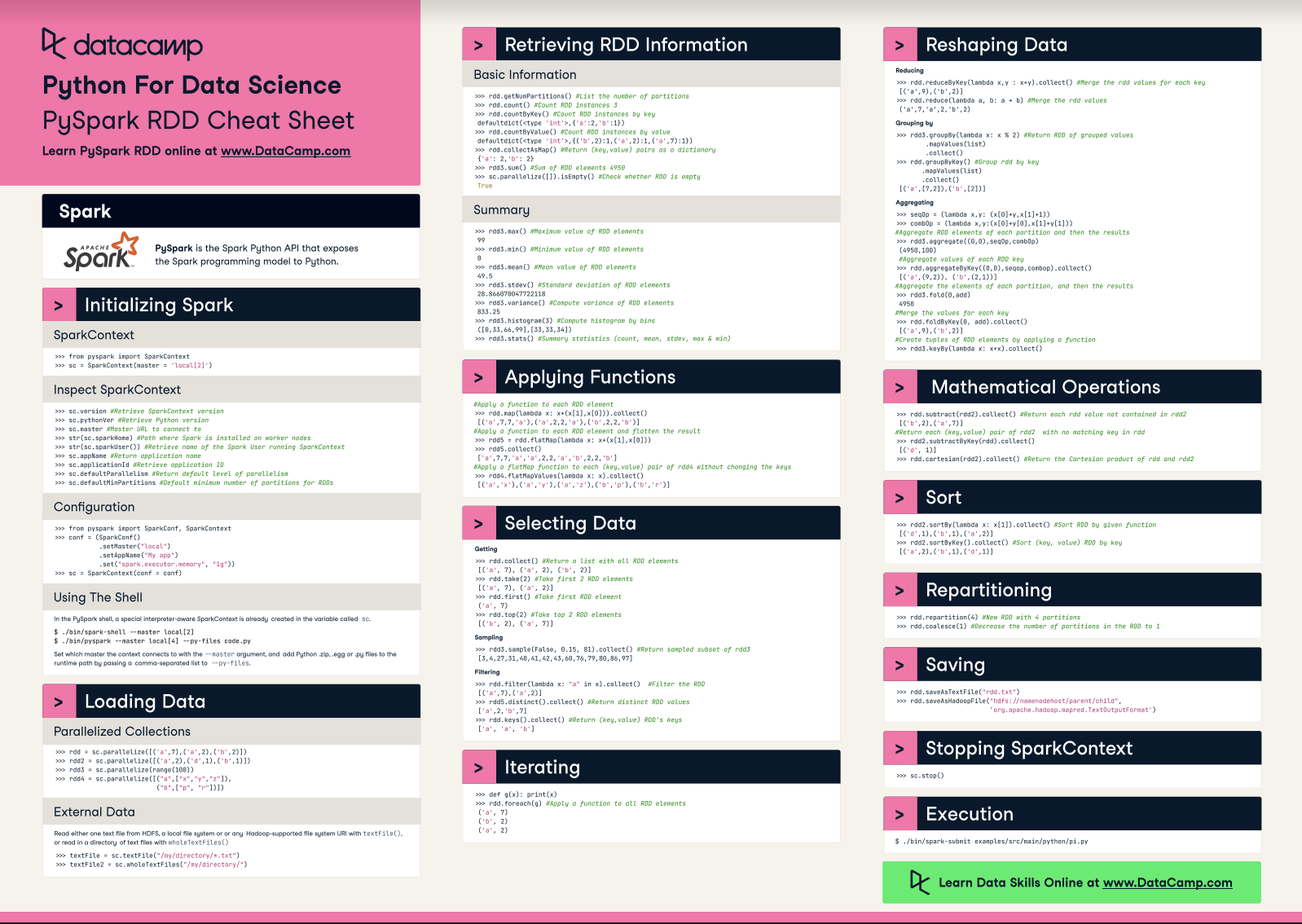

PySpark Cheat Sheet: Spark in Python

This PySpark cheat sheet, with code samples, covers the basics: initializing Spark in Python, loading data, sorting, and repartitioning.

Jul 2021 · 6 min read
