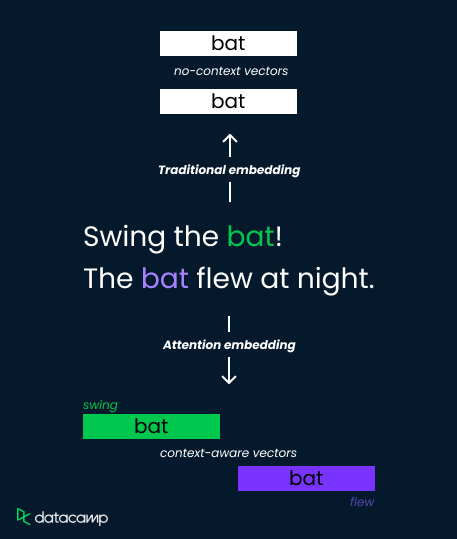

Attention Mechanism in LLMs: An Intuitive Explanation

Learn how the attention mechanism works and how it revolutionized natural language processing (NLP).

Apr 2024 · 8 min read
