Data Demystified: What is A/B Testing?

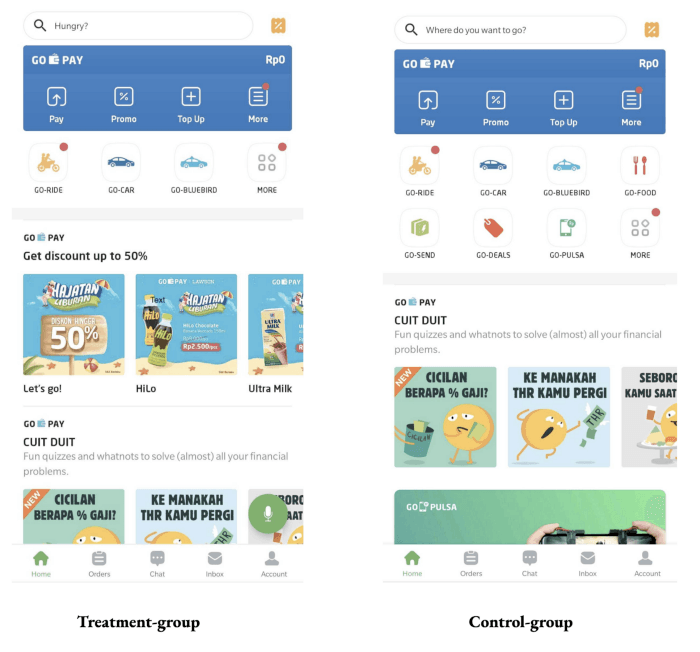

In part seven of Data Demystified, we’ll break down one of the most common use cases of statistical analysis in data science: A/B testing.

Sep 2022 · 10 min read

Related

blog

Data Demystified: An Overview of Descriptive Statistics

In the fifth entry of Data Demystified, we provide an overview of the basics of descriptive statistics, one of the fundamental areas of data science.

Richie Cotton

6 min

blog

Data Demystified: Data Visualizations that Capture Trends

In part eight of Data Demystified, we’ll dive deep into the world of data visualization, starting off with visualizations that capture trends.

Richie Cotton

10 min

blog

Data Demystified: Quantitative vs. Qualitative Data

In the second entry of Data Demystified, we’ll take a look at the two most common data types: quantitative and qualitative data. For more Data Demystified blogs, check out the first entry in the series.

Richie Cotton

5 min

blog

Data Demystified: What Exactly is Data?

Welcome to Data Demystified, a blog series breaking down key concepts everyone should know about data. In the first entry of the series, we’ll answer the most basic question of them all: what exactly is data?

Richie Cotton

4 min

podcast

Make Your A/B Testing More Effective and Efficient

Anjali Mehra, Senior Director of Product Analytics at DocuSign, discusses the role of A/B testing in data experimentation and how it can impact an organization.

Richie Cotton

50 min

code-along

A/B Testing in R

Compare the performance of two groups with this introduction to A/B testing in R.

Arne Warnke