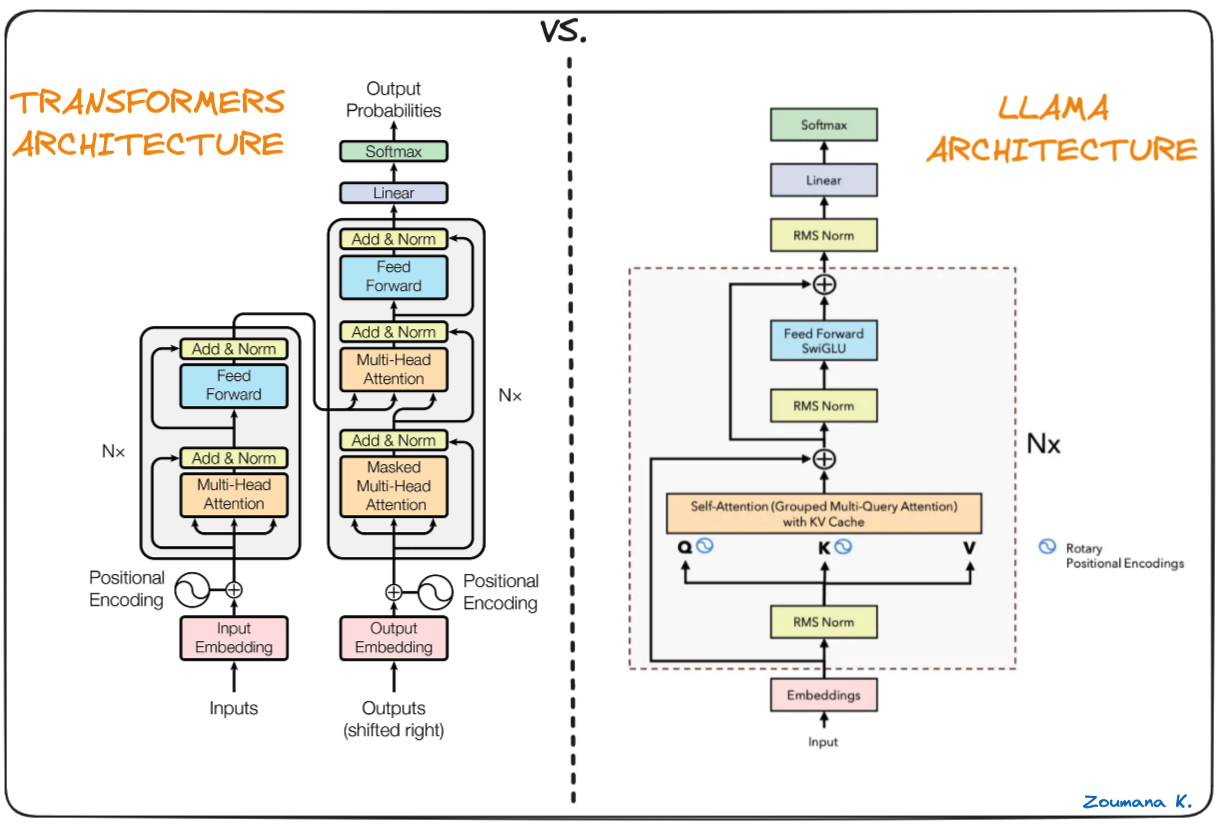

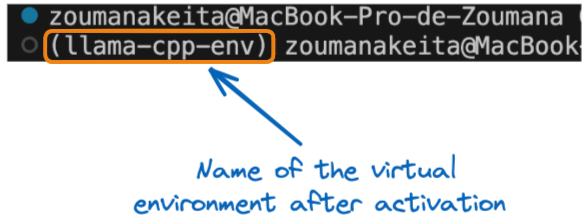

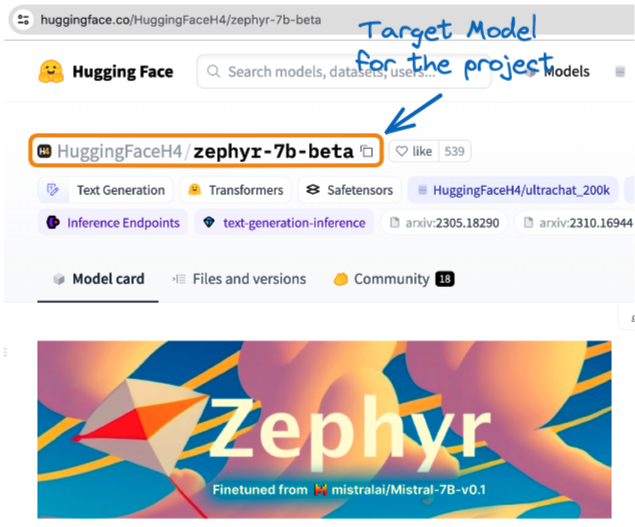

Llama.cpp Tutorial: A Complete Guide to Efficient LLM Inference and Implementation

This guide to Llama.cpp walks you through setting up a development environment, understanding the library's core functionality, and applying it to real-world use cases.

Nov 2023 · 11 min read
